General Information

about Multiple Pipelines

When you have a lot of data and processing it all in series on one computer takes too long, the work can be sped up. First, the data are split into several data sets. These data sets are then processed in parallel on several computers, for example in a grid cluster or on a supercomputer.
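As an illustration of the splitting step, here is a minimal Python sketch. The function name `split_into_data_sets` and the choice of contiguous, near-equal chunks are assumptions made for this example; the text does not prescribe how the data are divided.

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def split_into_data_sets(items: Sequence[T], n_sets: int) -> List[List[T]]:
    """Split items into n_sets contiguous data sets of near-equal size.

    Hypothetical helper: the original text only says the data are
    separated into several data sets, not how.
    """
    if n_sets < 1:
        raise ValueError("n_sets must be at least 1")
    # Every set gets `base` items; the first `extra` sets get one more.
    base, extra = divmod(len(items), n_sets)
    data_sets = []
    start = 0
    for i in range(n_sets):
        size = base + (1 if i < extra else 0)
        data_sets.append(list(items[start:start + size]))
        start += size
    return data_sets
```

Each returned list could then be handed to a separate job. In practice the split would more likely follow natural boundaries in the data (files, samples, regions) than a fixed count.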

These separate data sets, which are to be processed in parallel on several computers, are analyzed with a multiple pipeline.

A multiple pipeline is a pipeline that generates other pipelines as jobs for the computers in a supercomputer or grid cluster. Each job gets a specific task, depending on the data it has to process.

A multiple pipeline consists of two types of pipeline:

- Major pipeline

- Minor pipelines

Major pipeline:

The major pipeline is the pipeline that generates the so-called minor pipelines. It inspects all the data sets that have to be processed. For each data set, the major pipeline creates a minor pipeline as a job for the grid cluster or supercomputer. Each minor pipeline is expected to be submitted together with the data set that it has to analyze.

For each data set, the major pipeline determines the settings, that is, on what type of computer the data have to be analyzed. For example, the major pipeline assigns a large data set to a fast computer with a lot of memory, more nodes and a long calculation time. A small data set, on the other hand, is assigned to a computer with normal or lower speed, less memory, one or a few nodes and a relatively short calculation time. In this way, the data sets are analyzed on suitable computers in the grid cluster or supercomputer. It would be inefficient to run a relatively small data set on a very fast, large computer. The major pipeline prevents such an inefficient distribution of the jobs with their minor pipelines.
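The selection of settings by data set size could look roughly like the Python sketch below. The `JobSettings` fields, the size thresholds and the resource values are all hypothetical and chosen only for illustration; real values depend on the analyses and on the cluster.

```python
from dataclasses import dataclass

GIB = 1024 ** 3  # bytes in one gibibyte


@dataclass(frozen=True)
class JobSettings:
    """Resources requested for one minor pipeline (hypothetical fields)."""
    memory_gb: int
    nodes: int
    walltime_hours: int


def choose_settings(data_set_bytes: int) -> JobSettings:
    """Pick resources from the data set size.

    The thresholds and values are assumptions for this example only.
    """
    if data_set_bytes < 1 * GIB:
        # Small data set: one node, little memory, short run.
        return JobSettings(memory_gb=4, nodes=1, walltime_hours=1)
    if data_set_bytes < 50 * GIB:
        # Medium data set.
        return JobSettings(memory_gb=32, nodes=2, walltime_hours=12)
    # Large data set: more nodes, more memory, long run.
    return JobSettings(memory_gb=128, nodes=8, walltime_hours=72)
```

Keeping the rules in one function makes it easy to see why a small data set never ends up on the largest machines.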

To make it possible to submit these minor pipelines with their data sets, the major pipeline generates a so-called submit job script. In this submit job script, all the jobs, each with its minor pipeline and its data set, are put in a queue. The submit job script is run at the end. The minor pipelines then start running.

Minor pipeline:

A minor pipeline is a pipeline that has been generated by the major pipeline. It runs on a computer in the grid cluster or supercomputer with the settings it received from the major pipeline, and analyzes its data set there. Each minor pipeline is placed in one job. So, if there are 20 jobs running on a grid cluster, there are 20 minor pipelines, one in each job.

According to the settings, the minor pipeline will analyze its data on the right computer.

Overview:

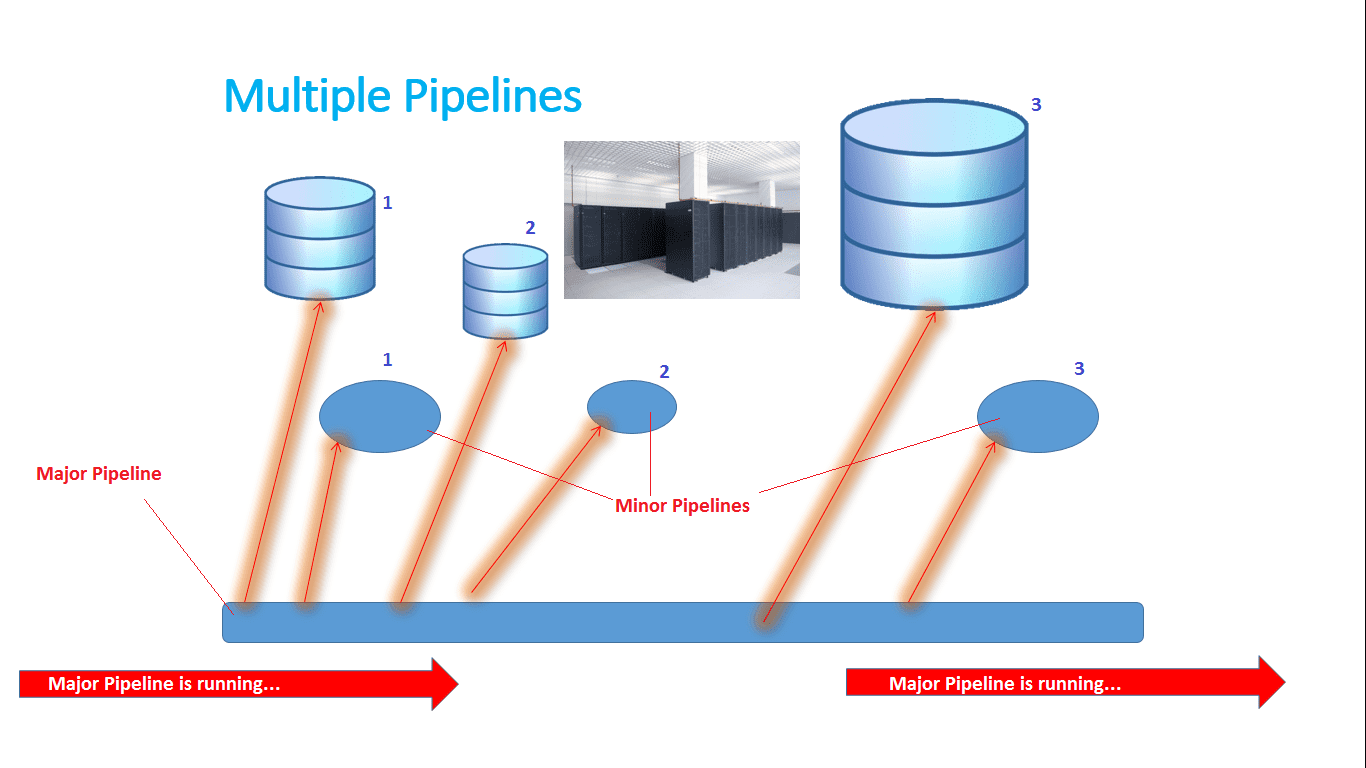

Figure ... gives an overview of how a multiple pipeline works, with its major pipeline in combination with its minor pipelines.

Figure ...: Overview of how a multiple pipeline works.

In this figure, you can see how the major pipeline of a multiple pipeline works together with its minor pipelines. While the major pipeline is running, it creates minor pipelines in jobs. Depending on the properties of each data set, a dedicated minor pipeline is created that is suited to analyzing that specific data set. Each minor pipeline is put into a job. Each job can be submitted.

Figure ... shows how a multiple pipeline works. What happens is explained below with reference to this figure.

The main task of the major pipeline is to generate minor pipelines for the data sets that have to be processed. In figure ..., you can see that there are 3 data sets. Data set number 1 represents a medium-sized data set. Data set number 2 represents a small data set. Data set number 3 represents a large data set.

When the major pipeline is started, it begins by scanning data set number 1. It determines what type of data the set contains and what size it has, and calculates how much memory the analyses need, how long the calculation will take, etc. In short, the major pipeline first determines the conditions (settings) for processing the data in that data set. These settings are put in the header section of the job script of the minor pipeline. When the job is submitted, the job script and the minor pipeline in it will run on the most suitable computer for processing and analyzing these data. The same is done for data sets number 2 and 3: for each of them, a separate minor pipeline is generated with its own settings.
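To make the idea of a job script header concrete, here is a small Python sketch that renders one job script. It assumes a SLURM-style scheduler, whose `#SBATCH` header directives are used here; the text does not name the scheduler, and other systems use different directives. The function name `render_job_script` and the command `python minor_pipeline.py` are placeholders for this example.

```python
import shlex


def render_job_script(job_name: str, data_set_path: str, memory_gb: int,
                      nodes: int, walltime_hours: int,
                      pipeline_command: str = "python minor_pipeline.py") -> str:
    """Return the text of one job script for one minor pipeline.

    Assumption: a SLURM-style scheduler. The pipeline command is a
    hypothetical placeholder for the actual minor pipeline.
    """
    lines = [
        "#!/bin/bash",
        # Header section: the settings determined by the major pipeline.
        f"#SBATCH --job-name={job_name}",
        f"#SBATCH --nodes={nodes}",
        f"#SBATCH --mem={memory_gb}G",
        f"#SBATCH --time={walltime_hours:02d}:00:00",
        "",
        # Body: run the minor pipeline on its own data set.
        f"{pipeline_command} {shlex.quote(data_set_path)}",
    ]
    return "\n".join(lines) + "\n"
```

For example, `render_job_script("minor_1", "data/set1.dat", 32, 2, 12)` produces a script whose header requests 2 nodes, 32 GB of memory and 12 hours, followed by the line that runs the minor pipeline on `data/set1.dat`.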

When the major pipeline has generated all the job scripts and minor pipelines, it creates a so-called submit job script. In this submit job script, each minor pipeline is written as a job script and put into a queue. After that, the major pipeline has finished its work.
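Generating the submit job script might be sketched as follows in Python. The file name `SubmitJobs.sh` comes from this document; the function name `write_submit_script` is hypothetical, and the default submit command `sbatch` assumes a SLURM-style scheduler (on other systems it could be, for example, `qsub`).

```python
import shlex
from pathlib import Path
from typing import Iterable


def write_submit_script(job_scripts: Iterable[str],
                        output_path: str = "SubmitJobs.sh",
                        submit_command: str = "sbatch") -> str:
    """Write a shell script that submits every job script, one per line.

    Assumption: `submit_command` is the scheduler's submit command;
    `sbatch` is only a default for this sketch.
    """
    lines = [
        "#!/bin/bash",
        "# Submits every minor-pipeline job script to the scheduler queue.",
    ]
    for job_script in job_scripts:
        lines.append(f"{submit_command} {shlex.quote(job_script)}")
    content = "\n".join(lines) + "\n"
    Path(output_path).write_text(content)
    return content
```

Because the script only contains submit commands, running it returns quickly; the scheduler decides when each job actually starts.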

All the minor pipelines can now be started with this submit job script. Start it by typing:

bash SubmitJobs.sh

All the jobs are then submitted and put into the queue. When a computer becomes free, the job whose settings suit it best is taken from the queue, and its data set is processed on that computer by its minor pipeline.

When all the minor pipelines have finished, all the data sets have been analyzed and processed. In most cases, the results of the separate minor pipelines are then joined together. A single pipeline could be programmed for this.
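A very simple version of such a joining step is sketched below in Python. It assumes that every minor pipeline writes a line-based text result file and that joining means concatenating those files in sorted file-name order; the function name `join_results` and both assumptions are made up for this example, since the text does not describe the result format.

```python
from typing import Iterable


def join_results(result_files: Iterable[str], merged_path: str) -> int:
    """Concatenate per-job result files into one file.

    Assumption: results are plain text, one record per line.
    Returns the number of lines written.
    """
    n_lines = 0
    with open(merged_path, "w") as merged:
        # Sort so the merged file does not depend on job finishing order.
        for result_file in sorted(result_files):
            with open(result_file) as part:
                for line in part:
                    # Make sure every record ends with a newline.
                    merged.write(line if line.endswith("\n") else line + "\n")
                    n_lines += 1
    return n_lines
```

Results that need more than concatenation, such as summed counts or merged tables, would need a joining step written for that format.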